New York Times vs OpenAI & Microsoft

The New York Times has sued OpenAI, the developer of ChatGPT, and its partner Microsoft for copyright infringement in a Manhattan federal court, in a case that could see the newspaper awarded billions of dollars in damages. Here, Beyond Corporate’s Molly Hackett and Natalie Kralski look at the use of copyrighted content to train AI models and the arguments surrounding publicly available works.

The New York Times has brought the lawsuit over OpenAI’s use of its content to train generative artificial intelligence and large language model systems, alleging that the companies are seeking to “free-ride” on The New York Times’ investment in its journalism.

In response to the lawsuit, OpenAI has argued that “training AI models using publicly available Internet materials is fair use, as supported by long-standing and widely accepted precedents”. A key precedent that OpenAI may rely on is the 2015 judgment in Authors Guild v Google, which held that Google’s scanning of millions of copyrighted books to create a searchable online database was fair use.

Although The New York Times is the first media outlet to sue OpenAI for copyright infringement, this latest lawsuit follows a succession of similar cases, such as the one brought against OpenAI by seventeen authors, including George RR Martin, alleging “systematic theft on a mass scale”.

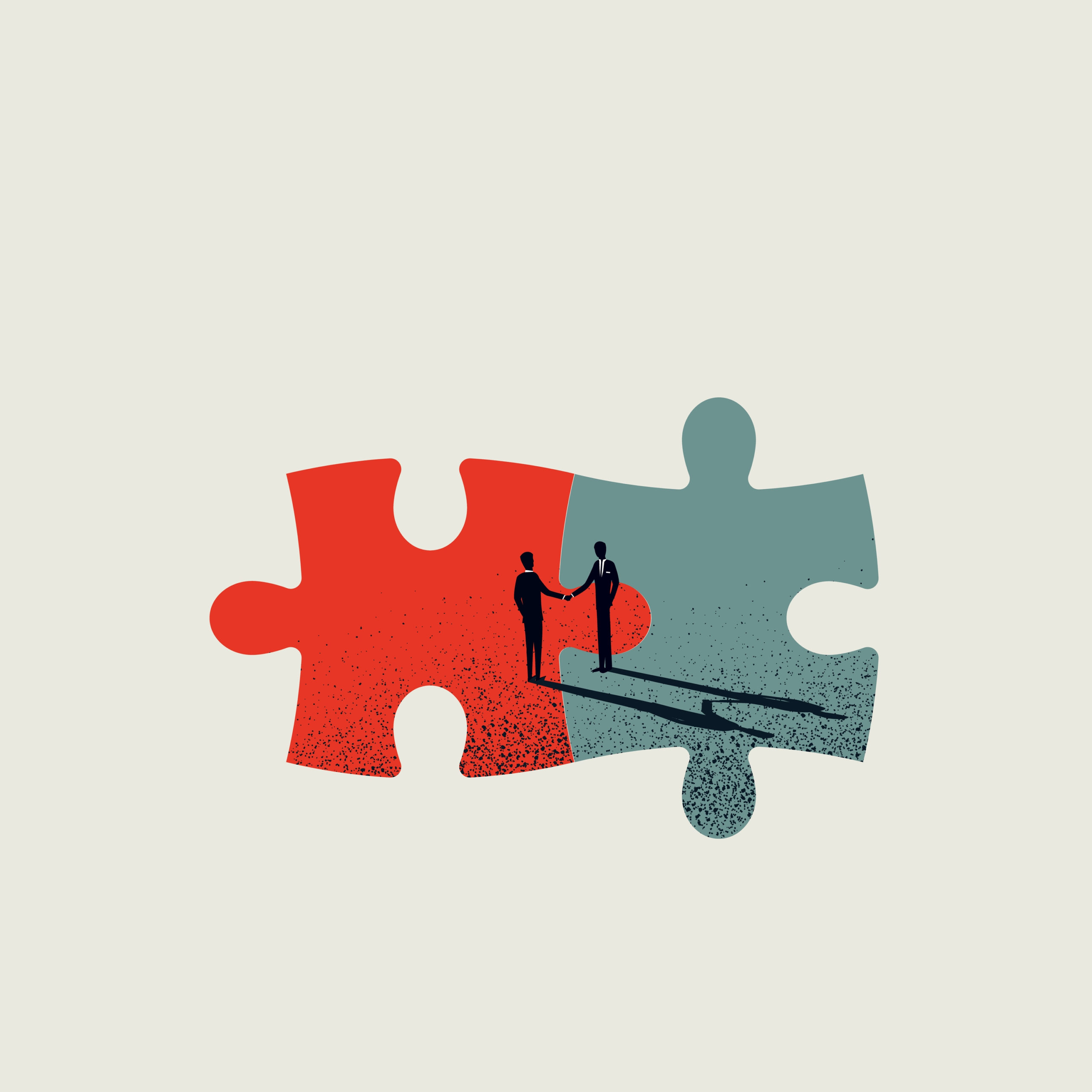

It is reported that the lawsuit followed a breakdown in negotiations between the companies over the use of The New York Times’ material. The dispute has come to a head as OpenAI seeks to agree a series of deals with other news organisations to license their content. Despite the lawsuit, and in an apparent attempt to show that it remains open to a resolution, OpenAI wrote in a blog post: “we are hopeful for a constructive partnership with The New York Times and respect its long history”.

This case sits against a backdrop of growing debate over the ethics and legal implications of AI during the past 18 months. Cases such as The New York Times v OpenAI have the potential to fundamentally alter how AI is permitted to operate and may open the floodgates to future legal actions against other AI companies.

The UK position?

Under English law, copyright is infringed where a third party copies the whole or a substantial part of a protected work (such as a literary, dramatic, musical or artistic work) without the copyright owner’s permission. In this case, OpenAI’s software has allegedly copied The New York Times’ valuable literary works whilst performing its primary functions: learning from and mining its training data (ie, the internet!) and regurgitating relevant information for the benefit of its users.

AI models are trained on vast datasets and, as in The New York Times v OpenAI, such datasets will very likely contain works protected by third-party copyright. Many AI software platforms appear to have operated in this way since their inception, and the practice has been widely acknowledged as problematic for intellectual property rights.

Whilst the UK has introduced a specific copyright exception for text and data mining (TDM) in section 29A of the Copyright, Designs and Patents Act 1988, it will not always apply to AI development or use, because it only covers TDM carried out for the sole purpose of non-commercial research.

AI is undoubtedly playing an increasing and pivotal role in both technical innovation and artistic creativity, but how will the balance be struck between protecting human works and enabling the development of AI?

The UK Intellectual Property Office (UKIPO) has been in talks with creative industry stakeholders and AI companies to agree an AI and copyright Code of Practice on the use of copyrighted content to train AI models, whilst respecting the rights of copyright holders. Perhaps unsurprisingly, given the size and complexity of the task, talks have broken down and plans for such a Code have been shelved. Rights holders argue that AI practices infringe copyright on a systematic scale, whereas AI companies submit that conducting TDM on publicly available and accessible works should not require a licence, on the basis that it does not amount to copyright infringement and serves only the purposes of training and development.

This divergence of opinion highlights the complex balance the government will need to strike between protecting the creative industries and allowing AI to grow and innovate.

If you have any questions or require any further advice on this topic, get in touch with our specialist teams today at hello@beyondcorporate.co.uk

[This blog is intended to give general information only and is not intended to apply to specific circumstances. The contents of this blog should not be regarded as legal advice and should not be relied upon as such. Readers are advised to seek specific legal advice.]